Wladyson Araújo

About

I am a software engineer focused on backend development and cloud infrastructure, with complementary experience in data and automation. I specialize in building scalable and resilient systems, automating processes, and designing architectures that support business growth while ensuring reliability, security, and performance under varying workloads. With a strong DevOps mindset, I work on creating efficient pipelines, optimizing infrastructure, and delivering solutions that have real impact on business operations.

- Location: Quixadá - CE, Brazil

Contact

- E-mail: wladysonaraujo991@gmail.com

Professional Profiles

Experience and Training

Experience and training in technology, with a focus on systems development, cloud infrastructure, and practical solutions applied in daily operations.

Academic

- Bachelor's in Information Systems

- Computer Technician

- Computing in Cloud Infrastructure (AWS)

- Data Science and Artificial Intelligence

Information Systems

Centro Universitário Católica de Quixadá, Quixadá - CE | Jan 2024 - Dec 2027

Training focused on developing systems and solutions in information technology. Focus on backend programming, databases, information security, etc. Projects using Python, Java, C#, PostgreSQL, C, etc. Integrative work with agile methodologies such as Scrum and Kanban. Advanced studies in algorithms, data structures, networks, and software engineering. Practical applications in digital security, artificial intelligence, and financial systems. Participation in academic projects focused on scalable and secure applications. Experience in collaborative environments and use of version control with Git and GitHub.

Data Science and Artificial Intelligence

Universidade Estadual do Ceará, Fortaleza - CE | Jan 2025 - Jan 2026

Intensive training focused on Data Science and AI, promoted by MCTI with certification issued by the State University of Ceará (UECE). The course covered everything from fundamentals to advanced applications in data science. I deepened my knowledge and developed practical projects integrating modern techniques applied to real-world problems.

Computing in Cloud Infrastructure

Escola da Nuvem, São Paulo - SP | May 2025 - Nov 2025

Technical training focused on cloud solution architecture with AWS services. Work with EC2, S3, RDS, IAM, Lambda, SNS, VPC, and CloudFormation in real-world environments. Applied best practices for security and resilience in scalable infrastructures: CI/CD pipelines with GitHub Actions and CodePipeline for automation, provisioning with Terraform and environment control through infrastructure as code, and monitoring with CloudWatch and log integration with VPC Flow Logs and CloudTrail. Focus on DevOps practices, cloud governance, and event-driven architecture.

Computer Technician

EEP Clemente Olintho, Baturité - CE | Jan 2021 - Dec 2023

Technical training focused on systems development, support, and IT infrastructure. Creation of desktop and web applications using Java, C#, HTML, CSS, and JavaScript. Introduction to relational databases with MySQL, including queries and data modeling. Practical classes in assembling, maintaining, formatting, and configuring computers. Knowledge of TCP/IP networks, cabling, and configuration of routers and switches. Programming logic, conditional structures, loops, and object-oriented programming.

Certifications

- Cyber Threat Management – Instituto Militar de Engenharia | 2025

- Oracle OCI Foundations - Oracle | 2025

- Oracle OCI DevOps Professional - Oracle | 2025

- Intermediate English for IT Professionals – Dell Technologies | 2025

- Apex Development and Oracle Database - Instituto Serzedello Corrêa | 2025

- Advanced Java Programming – Instituto Federal do Rio Grande do Sul (IFRS) | 2024

- Database Administrator – Instituto Federal do Rio Grande do Sul (IFRS) | 2024

- Database: Oracle PL/SQL – Instituto Federal do Rio Grande do Sul (IFRS) | 2024

- Power BI Discovery - Treinamento Microsoft - Comunidade Data Driver | 2024

Digital Badges

Training

Professional Experience

Back-end Developer

Pactus Acessoria em Gestão Publica | Jan 2026 - Present | Fortaleza - CE

- I work in backend development of web applications and mobile apps for healthcare management, developing intuitive tools that ensure agility in decision-making and excellence in service.

- I participate in the construction and evolution of APIs and services responsible for supporting critical workflows, such as appointment scheduling, notifications, and clinical data processing.

- I develop solutions that optimize decision-making and improve operational efficiency, ensuring greater speed and quality in serving users.

Developer and Data Scientist

Reis Marcas e Patentes | May 2025 - Jan 2026 | Fortaleza - CE

- I worked on developing a data analysis system and predictive dashboard for a business management consulting firm specializing in trademarks and patents.

- The project aimed to optimize operational flows and identify business patterns using automated data pipelines and machine learning models.

- The project involved data ETL, predictive modeling (Regression, XGBoost), and customer/process clustering to generate insights into seasonality, conversion rates, and internal bottlenecks.

- I automated and optimized the pipelines, ensuring consistency, scalability, and performance in data processing.

- The system lets users view conversion rates, average lead times, process status, and customer clusters, providing insights for data-driven strategic decisions and saving the team time.
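Two of the dashboard metrics mentioned above, conversion rate and average lead time, can be illustrated with a short Python sketch. This is only an illustration under assumed data: the record fields (`opened`, `closed`, `converted`) and the sample values are hypothetical and are not taken from the actual system.

```python
from datetime import date
from statistics import mean

# Hypothetical process records; field names and values are illustrative only.
processes = [
    {"client": "A", "opened": date(2025, 1, 6), "closed": date(2025, 1, 20), "converted": True},
    {"client": "B", "opened": date(2025, 1, 8), "closed": date(2025, 2, 7), "converted": False},
    {"client": "C", "opened": date(2025, 2, 3), "closed": None, "converted": False},
    {"client": "D", "opened": date(2025, 2, 10), "closed": date(2025, 2, 20), "converted": True},
]


def conversion_rate(records):
    """Share of records flagged as converted."""
    return sum(r["converted"] for r in records) / len(records)


def average_lead_time_days(records):
    """Mean number of days between opening and closing, over closed records only."""
    closed = [r for r in records if r["closed"] is not None]
    return mean((r["closed"] - r["opened"]).days for r in closed)


print(f"conversion rate: {conversion_rate(processes):.0%}")
print(f"average lead time: {average_lead_time_days(processes):.1f} days")
```

Open processes are excluded from the lead-time average here; whether that matches the real dashboard's definition is an assumption.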

Back-end Developer

Laboratório de Inovação Digital em Tecnologia e Sistemas de Informação | Apr 2025 - Dec 2025 | Quixadá - CE

- I worked in back-end development, creating and integrating APIs to connect different applications and enable fast and secure data exchange.

- I developed functionalities using languages such as Java, JavaScript, C++, and C#, applying object-oriented concepts along with web development methodologies, good programming practices, and clean architecture. I worked on implementing authentication, ensuring secure access to systems, as well as applying techniques to prevent SQL Injection and other vulnerabilities.

Back-end Developer

Suprema ADMIN - Freelance | Nov 2024 - Oct 2025 | Quixadá - CE

- I worked on the development of an automated system focused on data management in the healthcare field, specifically for clinics. Created for high-speed data processing, it interprets completely unstructured files such as PDFs, spreadsheets, patient records, medical reports, manual forms, and others. It organizes the information and generates detailed reports with appointment times, precise observations, and data ready to be sent for analysis and information validation.

- The system goes beyond organization: it recognizes data from spreadsheets and automatically enters it into other official systems, filling in the fields accurately. In cases of inconsistency, the system issues an alert, prevents the incorrect data from being sent, highlights the cell in red, and automatically moves on to the next item.

- The system recorded more than 12,526 automatically typed procedures in just 5 days, a process that previously took an average of 45 to 50 working days manually, considering the volume and complexity of the tasks. Today, all of this workload is handled automatically.

Data Analyst

Visão Connect Clinica | Mar 2025 - May 2026

- Responsible for the architecture and management of data pipelines that process millions of public contracts (municipal, state, and federal), ensuring high scalability, data integrity, and information security.

- I implemented automations that reduced operational time by approximately 90%, automating tasks that previously required a team of about 10 employees.

Junior Data Analyst

Visão Connect Clinica | Feb 2024 - Mar 2025

- I worked in the administrative area focusing on data management and analysis in healthcare, using Python to automate tasks and process information.

- I organized and digitized patient records that were previously kept on paper, eliminating manual processes and reducing the time spent filling out paperwork.

- I worked daily with large volumes of data, using Excel, Power BI, and other tools for processing, cross-referencing, and analysis.

- I automated manual processes, improving the organization of information and contributing to data security and integrity.

IT Technician

Visão Connect Clinica | Jul 2023 - Dec 2023

- I worked in technical support, providing on-site assistance to users, focusing on speed, efficiency, and accurate problem resolution.

- Performed preventive and corrective maintenance on hardware, identifying recurring performance failures caused by faulty RAM modules. After diagnosis, replaced the memory and thoroughly cleaned the equipment, finishing with the reinstallation and configuration of the necessary software to ensure the system functioned properly.

Behavioral Competence

- Continuous Learning.

- Problem Solving.

- Teamwork.

- Proactivity, Adaptability and Resilience.

- Critical Thinking.

- Time Management, Goal Orientation.

Languages

- English – Advanced

- Spanish – Advanced

Portfolio

Technologies and methods used in my work for productivity and development of scalable environments.

Technical Competence

Contribution to the Community

Cloud Computing and Cloud Architecture

- Development of a repository with the purpose of documenting and organizing all the practical labs carried out throughout my training in Cloud Computing with a focus on AWS. In total, the repository brings together 70 labs: 40 official labs executed during the training and 30 extra labs that I developed to deepen technical learning and apply more advanced scenarios. The content is distributed across three levels of complexity (10 basic, 10 intermediate, and 10 advanced), in addition to an exclusive section with professional labs aimed at those who wish to go beyond the fundamentals, simulating real and demanding cloud production environments.

Data Science & Artificial Intelligence

- Development of a repository to document and organize all practical labs conducted throughout my training and studies in Data Science and Artificial Intelligence. All labs were carefully documented, containing detailed descriptions, commented source code, datasets used, evaluation metrics, printouts and graphical visualizations, data processing pipelines, hyperparameter tuning, and practical simulations based on real-world market problems. The topics covered range from preprocessing, algorithms, Python programming, statistical fundamentals, database fundamentals, data structures, data visualization, data mining, modeling, programming applied to Artificial Intelligence, text processing, supervised and unsupervised learning, deep learning, exploratory data analysis, feature engineering, and prompt engineering to the construction, training, validation, and deployment of AI models. It also includes content on natural language processing (NLP), computer vision, reinforcement learning, and the integration of models with APIs and web applications.

Java Development

- This repository was developed to document and organize all practical Java labs, covering everything from language fundamentals to advanced projects. Each lab contains commented source code, a detailed description of its purpose and how it works, and compilation and execution instructions. Topics covered include basic syntax, variables, operators, control structures, object-oriented programming with classes, inheritance, polymorphism, interfaces and abstract classes, data structures such as lists, queues, stacks, maps, and trees, file manipulation and serialization, exception handling, generics, the use of streams and the Collections API, concurrent programming and multithreading, and database integration via JDBC.

Research and Scientific Article

Research and Study in Computer Vision and Natural Language Processing with Artificial Intelligence

Research and Study in Information Systems and Technology Management

Additional Extras

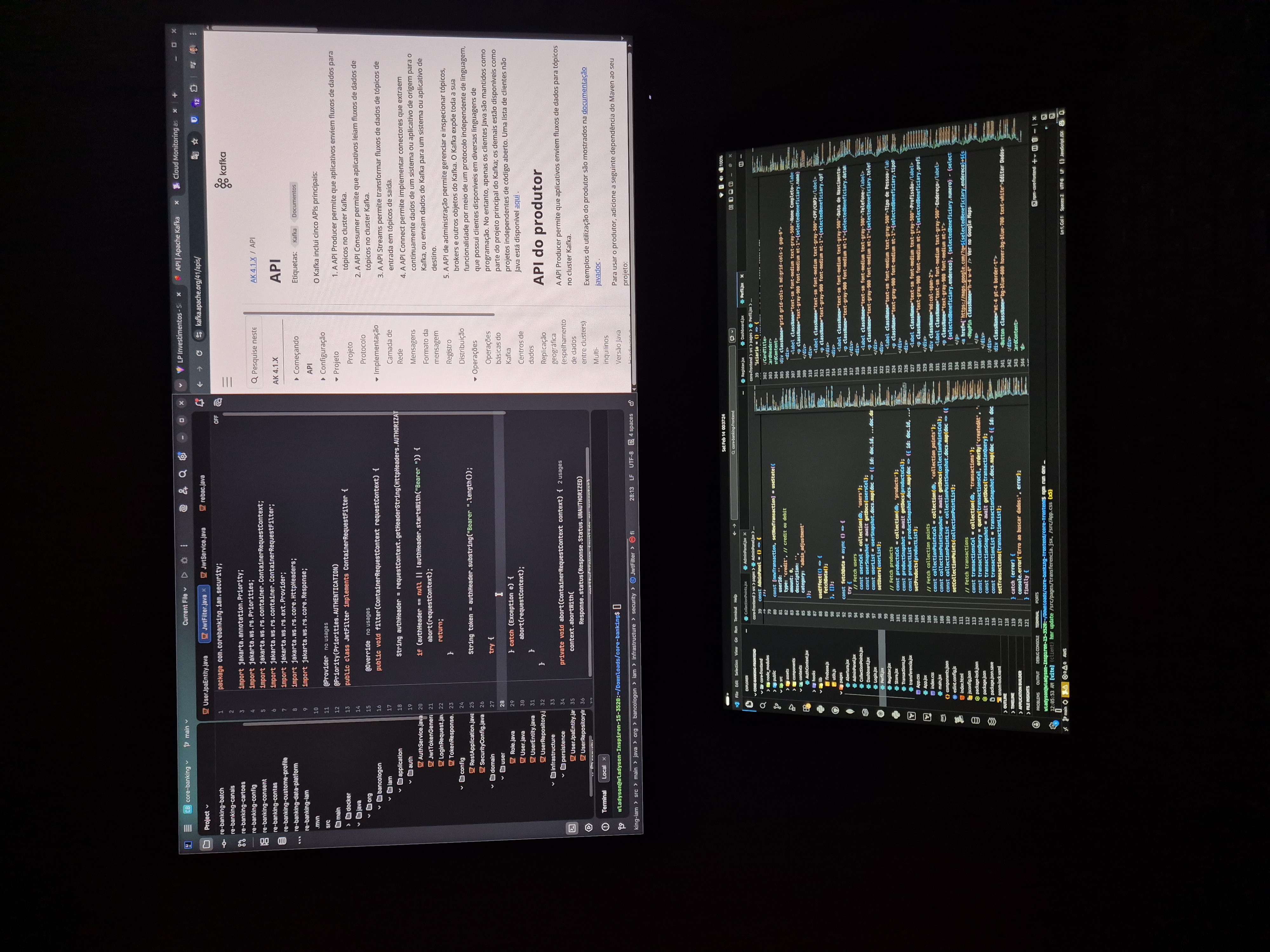

Financial Platform

The project consists of building several microservices responsible for account management, financial transactions, payments, treasury, accounting ledger, auditing, reconciliation, risk, scoring, digital channels, notifications, identity and security (IAM/KYC), orchestration of financial processes, integration with external systems, batch processing, and operational back office, among others. The solution operates on a Java architecture with asynchronous processing and communication via Kafka, ensuring consistency with the SAGA pattern and immutable event logging for regulatory purposes. The infrastructure is built with Terraform, using EKS, RDS, VPC, Vault, CI/CD with GitHub Actions and GitOps with ArgoCD, as well as observability with Prometheus, Grafana, and Alertmanager. In the initial phase, the environment's foundations and the accounts module are implemented, including complete CRUD and transactional integration with PostgreSQL. Following this, the transactions module is built, responsible for PIX, TED, and DOC transfers, using events in Kafka and guaranteeing exactly-once processing. Next, the card and reconciliation modules are developed, with daily processing routines and real-time workflows. The final phase includes the immutable audit system, performance optimizations, and resilience testing with controlled failures in Kubernetes, along with the completion of the SLO and SLI dashboards for monitoring the behavior of the microservices. The accounting closing processes perform nightly calculations and aggregations, while the audit trail permanently saves all events to comply with regulatory requirements.
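One common way to obtain exactly-once effects when a broker may redeliver an event is an idempotent consumer that remembers which event ids it has already applied. The Python sketch below illustrates that idea only. It is not the project's Java code: `AccountLedger`, the event fields, and the in-memory storage are hypothetical stand-ins, and whether the project uses this approach or Kafka transactions is not stated in the description.

```python
class AccountLedger:
    """In-memory stand-in for a transactional database (hypothetical)."""

    def __init__(self, balances):
        self.balances = dict(balances)      # account id -> balance in cents
        self.processed_event_ids = set()    # ids of events already applied

    def apply_transfer(self, event):
        """Apply a transfer once; return False when the event is a duplicate."""
        if event["event_id"] in self.processed_event_ids:
            return False
        source, target, amount = event["source"], event["target"], event["amount"]
        if self.balances.get(source, 0) < amount:
            raise ValueError("insufficient funds")
        self.balances[source] = self.balances.get(source, 0) - amount
        self.balances[target] = self.balances.get(target, 0) + amount
        self.processed_event_ids.add(event["event_id"])
        return True


ledger = AccountLedger({"alice": 10_000, "bob": 0})
transfer = {"event_id": "evt-1", "source": "alice", "target": "bob", "amount": 2_500}

first = ledger.apply_transfer(transfer)    # applied
second = ledger.apply_transfer(transfer)   # redelivery of the same event, ignored
print(first, second, ledger.balances)
```

In a real service the processed-id record and the balance update would need to be committed in the same database transaction, otherwise a crash between the two could still apply an event twice.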

Asynchronous Payment Gateway System

This project is designed for high-concurrency, low-latency scenarios, inspired by systems like PIX. The platform receives payment requests and executes processing in a completely asynchronous manner, ensuring logical atomicity and eventual consistency between accounts. The architecture is event-driven and composed of two main microservices: a Gateway Handler, responsible for ingesting, validating, and publishing payment events, and a Transaction Processor, responsible for executing debits and credits. Coordination between services is performed using the SAGA pattern via choreography, allowing for automatic compensation in case of partial failures. Communication between components occurs via Apache Kafka, ensuring reliable delivery, ordering, and fault tolerance during processing. The system runs on Kubernetes, guaranteeing orchestration, scalability, and high availability. The Gateway Handler was developed in Java with Reactive Quarkus, using Mutiny for non-blocking programming, allowing thousands of simultaneous connections with low latency. Critical performance metrics, including P99 latency, are continuously monitored using Prometheus. The persistence layer uses PostgreSQL as the transactional database, provisioned via Terraform, with explicit configuration of isolation levels and lock control to preserve transactional integrity in concurrent scenarios.
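The choreography-based SAGA flow described above can be sketched in a few lines of Python. This is a simplified illustration, not the project's Quarkus code: the `EventBus` class is a synchronous in-memory stand-in for Kafka, and the topic names, payload fields, and failure condition (an unknown destination account) are all hypothetical.

```python
from collections import defaultdict


class EventBus:
    """Tiny synchronous stand-in for a message broker such as Kafka."""

    def __init__(self):
        self.handlers = defaultdict(list)
        self.log = []  # topics in the order they were published

    def subscribe(self, topic, handler):
        self.handlers[topic].append(handler)

    def publish(self, topic, payload):
        self.log.append(topic)
        for handler in self.handlers[topic]:
            handler(payload)


bus = EventBus()
balances = {"payer": 100, "payee": 0}


def on_payment_requested(payment):
    """Debit step: reserve the money, then announce the result."""
    if balances.get(payment["src"], 0) < payment["amount"]:
        bus.publish("payment.rejected", payment)
        return
    balances[payment["src"]] -= payment["amount"]
    bus.publish("debit.completed", payment)


def on_debit_completed(payment):
    """Credit step: fails when the destination account does not exist."""
    if payment["dst"] not in balances:
        bus.publish("credit.failed", payment)
        return
    balances[payment["dst"]] += payment["amount"]
    bus.publish("payment.completed", payment)


def on_credit_failed(payment):
    """Compensation: undo the earlier debit."""
    balances[payment["src"]] += payment["amount"]
    bus.publish("payment.compensated", payment)


bus.subscribe("payment.requested", on_payment_requested)
bus.subscribe("debit.completed", on_debit_completed)
bus.subscribe("credit.failed", on_credit_failed)

bus.publish("payment.requested", {"src": "payer", "dst": "payee", "amount": 30})
bus.publish("payment.requested", {"src": "payer", "dst": "unknown", "amount": 50})
print(balances)
print(bus.log)
```

No central coordinator appears in the sketch: each step reacts to the previous step's event, and the failed credit triggers the compensating refund on its own.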

Account Reconciliation Engine (Batches and Streams)

An application designed to compare large volumes of internal transactions with bank statements, combining batch and real-time processing to ensure balance accuracy and reliable identification of discrepancies. The system performs batch reconciliation through complex jobs implemented in Java with Spring Batch, optimized for reading, processing, and writing large files, including bank statements up to 10 GB. The recurring execution of these processes is orchestrated via Kubernetes CronJobs, ensuring predictability and load isolation. For continuous reconciliations, the engine uses Kafka Streams to process financial events in real time, allowing for immediate reconciliation of transactions, reversals, and corrections as events are published, eliminating the exclusive dependency on daily batch processing. Raw files are stored in AWS S3, ensuring durability and traceability, while consolidated reconciliation results are persisted in AWS Redshift, enabling historical analysis and large-scale analytical queries. The entire infrastructure was provisioned as code with Terraform, ensuring reproducible and consistent environments. The system's observability was structured with Datadog, monitoring worker health, processing throughput, and Kafka queue lag, allowing for proactive detection of bottlenecks and operational failures.
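The core comparison behind a reconciliation run can be sketched as follows. This Python snippet is an illustration only, not the Spring Batch or Kafka Streams implementation: the function name `reconcile`, the transaction ids, and the idea of keying both sides by transaction id with amounts in cents are assumptions made for the example.

```python
def reconcile(internal, statement):
    """Compare two {transaction_id: amount_in_cents} mappings and report discrepancies."""
    shared_ids = internal.keys() & statement.keys()
    return {
        "missing_in_statement": sorted(internal.keys() - statement.keys()),
        "missing_internally": sorted(statement.keys() - internal.keys()),
        "amount_mismatches": {
            tx_id: (internal[tx_id], statement[tx_id])
            for tx_id in shared_ids
            if internal[tx_id] != statement[tx_id]
        },
    }


# Hypothetical sample data.
internal_transactions = {"tx-1": 1_000, "tx-2": 2_500, "tx-3": 400}
bank_statement = {"tx-1": 1_000, "tx-2": 2_050, "tx-4": 900}

report = reconcile(internal_transactions, bank_statement)
print(report)
```

Both inputs are held fully in memory here. For statements of the size mentioned above, a real job would read and compare the files in chunks instead.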

Suprema Admin - Healthcare Administrative Automation System

This data automation and processing system is designed for the administrative management of clinics and healthcare institutions. It handles large volumes of unstructured information and integrates it with official systems automatically, accurately, and in an auditable manner. The system ingests and interprets data from completely unstructured sources, including PDFs, spreadsheets, patient records, medical reports, manual forms, and various other reports. From these inputs, the platform extracts, organizes, and structures the information, generating standardized spreadsheets and detailed reports with appointment times, clinical observations, and data ready for analysis and validation, eliminating the need for manual data entry. In addition to data organization, the platform performs complete automation of data entry into official systems, recognizing fields, validating information, and filling out external forms with high precision. In scenarios of data inconsistency or absence, the system issues alerts, blocks the submission of incorrect information, and automatically highlights problematic fields, ensuring compliance and reducing operational errors. The solution also automates the identification of spreadsheets and reports required by different regulatory bodies, extracting relevant information and submitting it according to specific deadlines and formats, eliminating manual processes and email submissions. This workflow ensured standardization, traceability, and continuous regulatory compliance. As an operational result, the system automatically processed 12,526 data entry procedures in just 5 days, a volume that previously required between 45 and 50 working days of manual work by a team of approximately 6 people. Automation drastically reduced processing time and freed up the administrative team for strategic activities.
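The validate, flag, and continue behavior described above can be sketched in Python as shown below. It is a minimal illustration under assumptions: the required fields, the numeric rule for `procedure_code`, and the `submit` callback standing in for the external system are hypothetical and are not taken from the real system.

```python
REQUIRED_FIELDS = ("patient_id", "procedure_code", "date")  # hypothetical field names


def validate_row(row):
    """Return a list of problems found in one spreadsheet row."""
    problems = [f"missing {name}" for name in REQUIRED_FIELDS if not row.get(name)]
    code = row.get("procedure_code")
    if code and not code.isdigit():
        problems.append("procedure_code must be numeric")
    return problems


def process_rows(rows, submit):
    """Submit valid rows; flag invalid ones and move on to the next row."""
    flagged = []
    for index, row in enumerate(rows):
        problems = validate_row(row)
        if problems:
            flagged.append((index, problems))  # would be highlighted for review
            continue
        submit(row)
    return flagged


rows = [
    {"patient_id": "P1", "procedure_code": "0301", "date": "2025-03-01"},
    {"patient_id": "P2", "procedure_code": "0301", "date": ""},
    {"patient_id": "P3", "procedure_code": "12A", "date": "2025-03-02"},
]
submitted = []
flagged = process_rows(rows, submitted.append)
print(len(submitted), flagged)
```

Invalid rows never reach `submit`, which mirrors the rule that incorrect data is blocked rather than sent.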

Limits and Fraud Monitoring API

This project focuses on real-time validation and decision-making to prevent fraud and limit overruns during transactions. The goal was to create a high-throughput Java API that, with each received transaction, queries the customer's history and applies complex business rules, such as daily limits and usage frequency, in milliseconds. To ensure fast decisions, a two-tier cache (Caffeine as a local cache plus a remote Redis) was used to store limits and recent customer data in memory, keeping response times below 50 ms. The Redis cluster was provisioned with Terraform using AWS ElastiCache. Business rules were implemented with Drools, allowing fraud rules to be updated without recompiling the core code. The CI/CD pipeline in GitHub Actions validates and deploys new rules in a Blue/Green environment on Kubernetes, ensuring secure updates. Monitoring was performed using Prometheus and Grafana, creating dashboards that track "Rejected due to Limit" and "Rejected due to Fraud" rates, aligned with business metrics defined according to SRE practices.
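The two rule types named above, a daily limit and a usage-frequency check, can be illustrated with plain Python conditionals. This is a sketch under assumptions rather than the Drools rules themselves: `CustomerState`, `MAX_TX_PER_MINUTE`, the 60-second window, and the order in which the rules are checked are hypothetical.

```python
from dataclasses import dataclass, field

MAX_TX_PER_MINUTE = 3  # hypothetical frequency threshold


@dataclass
class CustomerState:
    daily_limit_cents: int
    spent_today_cents: int = 0
    recent_timestamps: list = field(default_factory=list)  # seconds


def evaluate(state, amount_cents, now):
    """Return a decision string and update the state only on approval."""
    recent = [t for t in state.recent_timestamps if now - t < 60]
    if len(recent) >= MAX_TX_PER_MINUTE:
        return "REJECTED_FRAUD"
    if state.spent_today_cents + amount_cents > state.daily_limit_cents:
        return "REJECTED_LIMIT"
    state.spent_today_cents += amount_cents
    state.recent_timestamps = recent + [now]
    return "APPROVED"


state = CustomerState(daily_limit_cents=10_000)
decisions = [
    evaluate(state, 4_000, now=0),
    evaluate(state, 7_000, now=10),   # would exceed the daily limit
    evaluate(state, 1_000, now=20),
    evaluate(state, 1_000, now=30),
    evaluate(state, 1_000, now=40),   # fourth attempt inside one minute
    evaluate(state, 1_000, now=100),  # window has expired
]
print(decisions)
```

The two rejection labels correspond to the "Rejected due to Limit" and "Rejected due to Fraud" rates tracked on the dashboards.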

Real-Time Credit Limit Management System

I implemented the service using Quarkus with reactive endpoints via Mutiny, ensuring fast startup, low memory consumption, and immediate response. This improves pod efficiency in Kubernetes (EKS) and reduces costs. For limit storage, I used Caffeine as a local cache and AWS ElastiCache (Redis) as a distributed cache, applying an advanced cache-aside strategy. Redis was provisioned via Terraform, and the Java service includes fallback and failover in case the cache becomes unavailable. For integrity, I implemented the Two-Phase Commit pattern to maintain strict consistency between the limit service and the debit/credit service, meeting regulatory requirements for data integrity. For performance testing, I ran large-scale load tests with Gatling, integrated into the CI/CD pipeline in GitHub Actions, ensuring that performance issues are detected before production deployment.
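The cache-aside lookup with a local tier, a remote tier, and a fallback when the remote cache is unavailable can be sketched as follows. This Python snippet is illustrative only: the classes stand in for Caffeine and Redis, `load_limit_from_db` is a hypothetical loader, `ConnectionError` is an assumed failure signal, and expiry and eviction are left out for brevity.

```python
class InMemoryRemoteCache:
    """Stand-in for a distributed cache such as Redis."""

    def __init__(self):
        self.data = {}

    def get(self, key):
        return self.data.get(key)

    def set(self, key, value):
        self.data[key] = value


class UnavailableRemoteCache:
    """Simulates a remote cache outage."""

    def get(self, key):
        raise ConnectionError("remote cache unavailable")

    def set(self, key, value):
        raise ConnectionError("remote cache unavailable")


class TwoTierLimitCache:
    def __init__(self, remote_cache, load_from_db):
        self.local = {}                 # local tier (per process)
        self.remote = remote_cache      # distributed tier
        self.load_from_db = load_from_db

    def get_limit(self, customer_id):
        if customer_id in self.local:
            return self.local[customer_id]
        try:
            value = self.remote.get(customer_id)
        except ConnectionError:
            value = None                # remote tier down: fall back to the database
        if value is None:
            value = self.load_from_db(customer_id)
            try:
                self.remote.set(customer_id, value)
            except ConnectionError:
                pass                    # keep serving even if the write-back fails
        self.local[customer_id] = value
        return value


db_reads = []


def load_limit_from_db(customer_id):
    db_reads.append(customer_id)
    return 500_000


cache = TwoTierLimitCache(InMemoryRemoteCache(), load_limit_from_db)
cache.get_limit("c-1")
cache.get_limit("c-1")                  # served from the local tier
degraded = TwoTierLimitCache(UnavailableRemoteCache(), load_limit_from_db)
degraded_limit = degraded.get_limit("c-2")
print(db_reads, degraded_limit)
```

The database is read once per customer even though `c-1` is requested twice, and the outage of the remote tier does not prevent `c-2` from being served.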

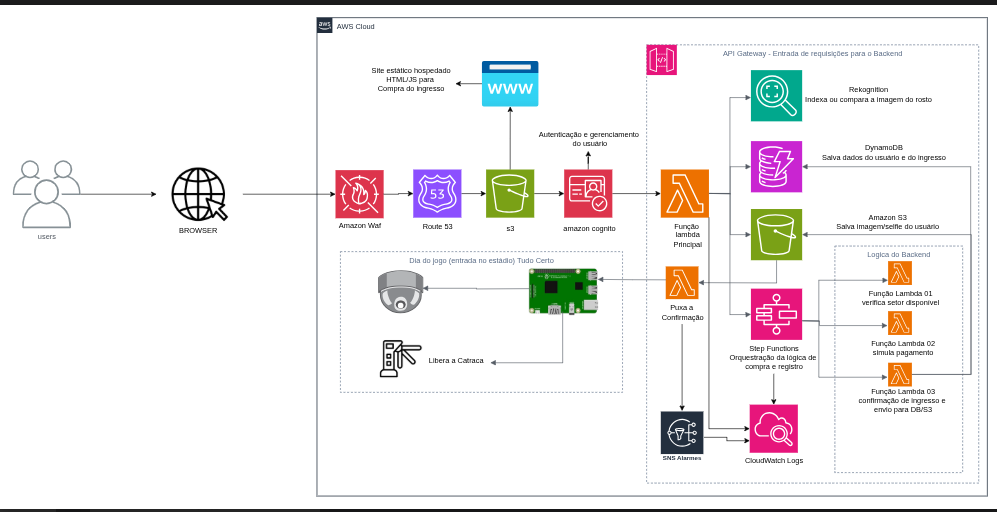

Digital ticketing and sales system

This project, a facial recognition identity validation system for Santa Cruz Futebol Clube, complies with the General Sports Law (14.597/2023) and promotes greater security, speed, and comfort for fans. The project integrates advanced AWS technologies such as Amazon Rekognition for facial recognition, DynamoDB for secure data storage, API Gateway, and AWS Lambda for serverless processing, ensuring high scalability and availability exceeding 99% on game days. Focusing on fraud reduction, elimination of ticket scalping, and faster access, the system offers rapid biometric authentication (less than 2 seconds), real-time notifications, and dashboards for access management and auditing. All this is done in full compliance with the LGPD (Brazilian General Data Protection Law), ensuring data encryption and rigorous permission control. I used the Scrum agile methodology to lead the development, implementing infrastructure as code with Terraform, containerization with Docker, and CI/CD pipelines for continuous and secure delivery. The entire scenario was designed exclusively to expedite the arrival of large crowds at stadiums, thus preventing congestion and long waiting times for fans to enter.

Java Development - Rocketseat | Feb/2025 - Jun/2025

- Development of a serverless application with Java and Maven for URL redirection, with AWS S3 integration for the creation and management of buckets, exposure of endpoints via API Gateway, AWS Lambda for serverless processing, and manipulation of JSON data with Jackson. Development of a Java back-end application with Maven using Spring Boot and a REST API, structured from data modeling to the implementation of the main functionalities, with MySQL integration using JPA and JDBC and records for data manipulation. Implementation of a referral system with a ranking of the users who invited the most people to the event.

NLW Pocket: Mobile - Kotlin - Rocketseat | Dec/2024 - Dec/2024

- Development of a native Android mobile application with Kotlin, Android Studio, MVI + Clean Architecture, Jetpack Compose, ViewModel and Lifecycle, Navigation, Google Maps API, Flow and Coroutines, Ktor, Kotlinx Serialization, Coil, and Gradle.

NLW Pocket: Javascript - Rocketseat | Nov/2024 - Nov/2024

- Development of a back-end application in Node.js, application of REST API concepts, using TypeScript, Fastify as a framework, integration of DrizzleORM + PostgreSQL, Docker and Zod for data validation. Development of a front-end application in ReactJS, application of the concepts of Properties, States and Components, typing with TypeScript, tooling with Vite, responsive interface with TailwindCSS, consumption of Node.js API, asynchronous data management with TanStack Query.

PHP Development – Rocketseat | Oct/2024 – Oct/2024

- Development of a PHP application with Laravel following the MVC architecture: creation of controllers, models, and views to structure the application, SQLite, use of migrations, creation of dummy data with factories and seeders to populate the database, validations, and creation of dynamic and reactive interfaces with Livewire.

IA na prática (AI in Practice) - Rocketseat | Aug/2024 – Aug/2024

- Development of a Python application, integration with the OpenAI GPT model, use of the CrewAI framework, creation of an agent for consuming DuckDuckGo data, creation of a frontend with the Streamlit framework, deployment via Streamlit Cloud.

NLW Journey - Java by Rocketseat | Jul/2024 – Jul/2024

- Development of a Java back-end application with Spring Boot using modern tools to ensure efficiency and scalability. This project used the H2 database, JPA, migrations via Flyway, records for data transfer, and Lombok.